Install CentOS 8 / Stream in Parallels Desktop #centos #parallels #macos

Wed, Nov 17 2021, 18:41 Linux, Mac, macOS, networking, server, Unix, Webserver Permalink

I wrote a short how-to on creating a CentOS 8 VM in Parallels Desktop for Mac, for local development and testing.

Setup your local macOS X web server #apache #macos #macosx #webserver #localhost #webdevelopment

Sun, Jun 24 2018, 09:55 Apple, Mac OS X, networking, PHP, programming, server, Unix, Webserver Permalink

I've put together a page where I describe how you can set up a web development environment with two or more Apple Macs.

If you are a web developer working on Apple Macs, you sure do want to use the macOS X built-in web server, Apache, on all your Macs. And you want to be able to access your Sites folder, local web documents folder and your other Mac via HTTP.

I'll describe how to set it up on macOS High Sierra (10.13.5). If you're on a previous version of macOS X, not to worry, the steps to take are practically identical. Read on ...

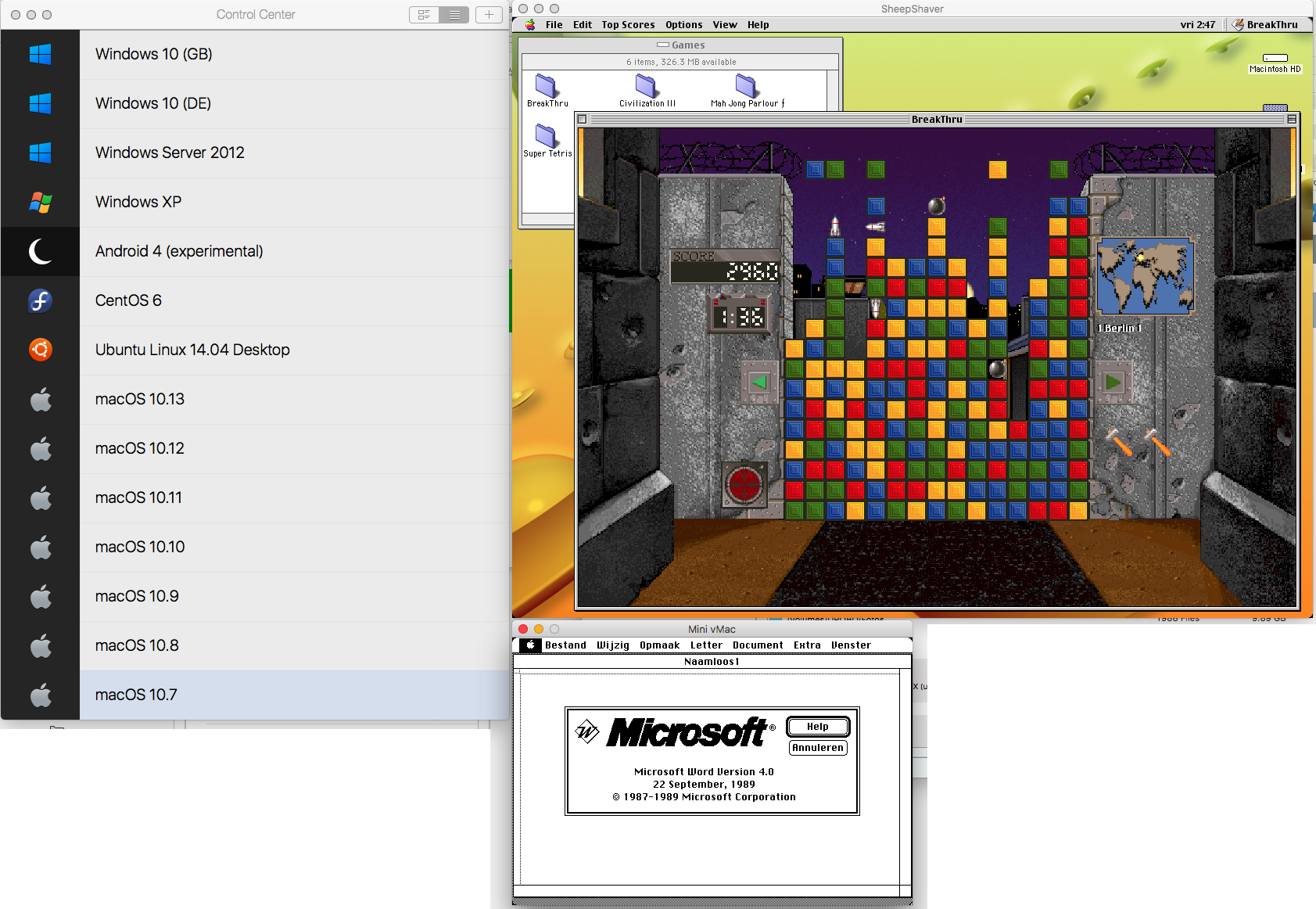

I love the invention of virtualization

Fri, Mar 30 2018, 11:09 Apple, Mac OS X, server, software, Unix, Windows Permalink

Apache vhost sort order on CentOS

Fri, Oct 23 2015, 11:33 Linux, server, Unix, Webserver Permalink

I’ve written a page on how to control the order of Apache vhosts [on CentOS]. Just for reference.

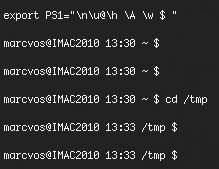

Cool Unix-Shell Prompt

Tue, Nov 04 2014, 13:25 Linux, Mac OS X, Unix Permalink

At the shell prompt in Terminal or in an SSH session, copy/paste the following line:

$ export PS1="\n\u@\h \A \w $ "

You will then always have the following information present in your prompt: the user name (\u), the host name (\h), the current time in 24-hour HH:MM format (\A) and the current working directory (\w).
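To keep the prompt across sessions, the export line has to go into a shell startup file. Here is a minimal sketch, assuming bash. The function name add_prompt and the choice of startup file are my own assumptions, not part of the original tip:

```shell
#!/bin/sh
# Append the prompt definition to a shell startup file, but only once.
# add_prompt is a hypothetical helper name; the startup file is passed in
# because it differs per system (~/.bash_profile vs. ~/.bashrc).
add_prompt() {
    profile="$1"
    line='export PS1="\n\u@\h \A \w $ "'
    touch "$profile"
    # -F: fixed string, -x: match the whole line, -q: no output
    if ! grep -Fxq -- "$line" "$profile"; then
        printf '%s\n' "$line" >> "$profile"
    fi
}
```

Call it as add_prompt ~/.bash_profile (bash on macOS) or add_prompt ~/.bashrc (most Linux systems), then open a new Terminal window. Running it twice does not add the line twice.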

Quickly transfer MySQL databases to a new server

Fri, Aug 08 2014, 10:36 Database, Linux, Mac OS X, MySQL, Unix Permalink

Again I needed to transfer all data from one server to another. I knew I had documented the transfer of MySQL databases somewhere (it's deep inside the Replication how-to) and decided to post the steps again here, so they're quicker to find.

One can transfer MySQL databases in various ways:

- Using mysqldump and zip + ftp

- Zip the database itself + ftp (you might need to repair the tables after unzipping)

- Use Navicat's Data Transfer module (not always good for tables with millions of records or blob data)

The steps below use the second method. On the old server:

$ cd /var/mysql/ (or /var/lib/mysql/)

$ sudo zip -r ~/[database].zip [database]

Do this for each database that you want to copy. Then send all zip files via FTP to the new server.

Start an SSH session with the remote server and enter the following commands:

$ cd /var/mysql (or /var/lib/mysql/)

$ sudo unzip ~/[database].zip

$ sudo chown -R _mysql:admin [database]

For the above chown, first check with ls -l whether _mysql:admin is the right owner and group. Then do this for each unzipped database.

Next, start Navicat; you should now see your databases in the connection to the new server. If not, you probably forgot either to do a Refresh Connection or to run the chown command.

If you can access the tables and view data, good! If not, right-click the table and choose Maintain->Repair Table->Quick or ->Extended and then try again.
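The first method in the list, mysqldump, is not spelled out above. A rough sketch of how it could look follows. The function names, the root user and the file locations are placeholders of mine, and I use gzip instead of zip here because it works in a pipe:

```shell
#!/bin/sh
# Sketch of the "mysqldump and zip + ftp" route.
# dump_db / restore_db are hypothetical helper names; "-u root -p" is an
# assumption about the account (-p makes the client ask for the password).

# On the old server: dump one database to a compressed SQL file.
dump_db() {
    db="$1"; out_dir="${2:-$HOME}"
    mysqldump -u root -p --single-transaction --routines "$db" \
        | gzip > "$out_dir/$db.sql.gz"
}

# On the new server: create the database if needed and load the dump.
restore_db() {
    db="$1"; in_dir="${2:-$HOME}"
    mysql -u root -p -e "CREATE DATABASE IF NOT EXISTS \`$db\`"
    gunzip -c "$in_dir/$db.sql.gz" | mysql -u root -p "$db"
}
```

Run dump_db mydatabase on the old server, send ~/mydatabase.sql.gz over via FTP as before, then run restore_db mydatabase on the new one. Since the data is re-imported through the MySQL server, there is no chown step with this route.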

ProFTPD with MySQL backend

Fri, Jul 18 2014, 16:23 FTP, Linux, MySQL, server, Unix Permalink

I know there are already many pages about ProFTPD and MySQL, but all info I needed was scattered over the Internet.

Therefore I collected all the info I needed and put it into one page: Setup ProFTPD and MySQL.

Daily Script on Mac OS X Server did not clean up /tmp

Wed, Oct 31 2012, 18:27 Apple, Mac OS X, server, Unix Permalink

Lately my /tmp folder was piling up with files (krb5cc*) without any sign that these files were regularly deleted. A bit of googling showed that these come from the Open Directory Server, but that's something I cannot control. So I went to investigate why the daily script would not delete them. I googled a bit again and found out where the parameter file for the daily, weekly and monthly cleanup scripts is located: /etc/defaults/periodic.conf. There, I found these settings for /tmp:

# 110.clean-tmps

daily_clean_tmps_enable="YES" # Delete stuff daily

daily_clean_tmps_dirs="/tmp" # Delete under here

daily_clean_tmps_days="3" # If not accessed for

daily_clean_tmps_ignore=".X*-lock .X11-unix .ICE-unix .font-unix .XIM-unix"

daily_clean_tmps_ignore="$daily_clean_tmps_ignore quota.user quota.group"

# Don't delete these

daily_clean_tmps_verbose="YES" # Mention files deleted

The one to look for is where it says "3". This indicates that the routine should clean up files not accessed for 3 days. But it did not, and the files were not listed in the ignore parameters. Even rm -rf krb5cc* immediately returned an error that its argument list was too long. Therefore I started reading what the exact values for this parameter should be.

Well, it turns out that the value needs a unit, like d (days), h (hours) or m (minutes). I found that out by reading /etc/periodic/daily/110.clean-tmps and studying how find uses -atime, -ctime and -mtime and how to add or subtract values. Here are a few find commands, copied from /etc/periodic/daily/110.clean-tmps, which I ran to make sure that what I had just read was right:

$ cd /tmp

$ sudo find -dx . -fstype local -type f -atime +1h -mtime +1h -ctime +1h

$ sudo find -dx . -fstype local -type f -atime +1d -mtime +1d -ctime +1d

$ sudo find -dx . -fstype local -type f -atime +2d -mtime +2d -ctime +2d

Further reading suggested using override files, so I sudo'd into vi to create the file /etc/periodic.conf with the following contents:

daily_clean_tmps_days="2d"

Yes, 2 days. Three days is too long for a server, in my opinion. The file's attributes look like this:

marcvos @ ~ $ ls -l /etc/periodic.conf

-rw-r--r-- 1 root wheel 27 Oct 25 16:38 /etc/periodic.conf
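If you prefer not to use vi, the same one-line file can be written from the shell. This is only a sketch: write_override is a name I made up, and the target path is a parameter so you can try it on a scratch file before touching /etc:

```shell
#!/bin/sh
# Write the one-line override file for the daily /tmp cleanup.
# write_override is a hypothetical helper; note that ">" replaces any
# existing file at the target path.
write_override() {
    target="$1"; days="$2"
    printf 'daily_clean_tmps_days="%s"\n' "$days" > "$target"
    # rw-r--r--, the same mode as in the listing
    chmod 644 "$target"
}
```

From a root shell, write_override /etc/periodic.conf 2d produces the same 27-byte file as shown in the listing.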

Next, delete the file daily.out:

$ sudo rm /var/log/daily.out

Reboot the server. Check your /tmp folder and /var/log/daily.out over the next few days.

In my case, I now finally saw all those files getting deleted.
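About the "argument list too long" error from rm -rf krb5cc* mentioned earlier: it happens because the shell expands the wildcard into more arguments than a single command can take. For a one-off cleanup you can let find do the matching instead. A small sketch, where delete_matching is just a name I picked:

```shell
#!/bin/sh
# Delete files matching a pattern without the shell expanding the pattern.
# delete_matching is a hypothetical helper name.
delete_matching() {
    dir="$1"; pattern="$2"
    # -maxdepth 1: only the directory itself, no subdirectories
    # -type f:     regular files only
    # -name:       find matches the quoted pattern itself
    find "$dir" -maxdepth 1 -type f -name "$pattern" -delete
}
```

On the server this comes down to sudo find /tmp -maxdepth 1 -type f -name 'krb5cc*' -delete. Keep the quotes around the pattern, otherwise the shell expands it again.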

Using FTP in Lasso

Sat, Jul 10 2010, 22:04 AS400, FTP, iSeries, Lasso, Linux, Mac OS X, programming, Unix, Windows Permalink

I created a page about the various ways to use the FTP protocol with Lasso, from and to various systems. Find it here. I have been trying to get it to work for the last few days and now that it does, I wanted to share what you can do. Of course there are probably installations where it all works out of the box, but not for me this time.
